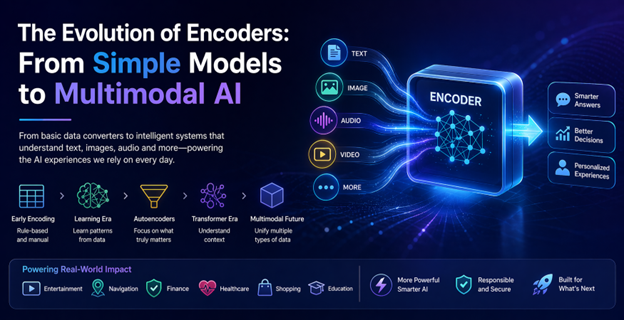

The evolution of encoders: From simple models to multimodal AI

When people talk about artificial intelligence, they usually focus on what it produces: human-like text, stunning images, or eerily accurate recommendations. What rarely gets attention is how AI understands anything in the first place. That understanding begins with encoders. Think of an encoder as a translator that converts messy, real-world information into a structured, numerical form that machines can work with.

Over time, encoders have quietly evolved from simple data converters into sophisticated systems capable of understanding multiple forms of information at once. This transformation didn’t happen overnight. It’s a story of gradual progress, practical challenges, and breakthroughs driven by real-world needs.

The beginning: When encoding was just a technical step

In the early days of machine learning, encoding was more of a technical necessity than an intelligent process. Developers had to manually decide how to represent data. If a system needed to understand categories like “small,” “medium,” and “large,” those labels had to be converted into numbers.
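As a minimal sketch of that manual step, the snippet below shows two common hand-written schemes for the size labels mentioned above. The function names are hypothetical. Ordinal encoding keeps the small < medium < large order, while one-hot encoding treats the labels as unrelated; which one fits depends on whether the order matters to the system.

```python
# Size labels taken from the example in the text.
SIZES = ["small", "medium", "large"]

def ordinal_encode(label):
    """Map a label to its position in SIZES, preserving the size order."""
    return SIZES.index(label)

def one_hot_encode(label):
    """Map a label to a vector with a single 1, implying no order between labels."""
    return [1 if label == size else 0 for size in SIZES]

orders = ["medium", "small", "large"]
print([ordinal_encode(o) for o in orders])   # [1, 0, 2]
print([one_hot_encode(o) for o in orders])   # [[0, 1, 0], [1, 0, 0], [0, 0, 1]]
```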

This worked, but only to a point. The system didn’t truly understand anything; it just processed numbers. For example, an early online store might recommend products based on basic categories, but it couldn’t grasp subtle relationships. Someone buying running shoes wouldn’t necessarily be shown fitness watches or hydration gear unless those links were explicitly programmed.

In short, early encoders handled data, not meaning.

Learning instead of being told

Everything started to change when neural networks entered the picture. Instead of relying entirely on human instructions, systems began learning patterns directly from data. Encoders became more than converters; they became learners.

Take image recognition as a real-world example. Instead of telling a system what defines a cat (its ears, whiskers, and tail), developers could train it on thousands of labelled images. The encoder would gradually figure out the patterns on its own. This change made AI far more adaptable and accurate.

The same idea applied to language. Words were no longer just symbols; they became vectors, mathematical representations that capture meaning and relationships. That’s why modern search engines can understand that “cheap flights” and “budget airfare” are closely related, even though the wording is different.
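To make the idea of "related vectors" concrete, here is a small sketch that compares phrases by cosine similarity. The three-dimensional vectors are invented for illustration; real embeddings are learned from data and usually have hundreds of dimensions.

```python
import math

# Toy vectors, made up for this example (not produced by any real model).
EMBEDDINGS = {
    "cheap flights":  [0.9, 0.8, 0.1],
    "budget airfare": [0.8, 0.9, 0.2],
    "cat whiskers":   [0.1, 0.0, 0.9],
}

def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

related = cosine_similarity(EMBEDDINGS["cheap flights"], EMBEDDINGS["budget airfare"])
unrelated = cosine_similarity(EMBEDDINGS["cheap flights"], EMBEDDINGS["cat whiskers"])
print(f"related: {related:.2f}, unrelated: {unrelated:.2f}")
```

With these toy values, the two travel phrases score close to 1 while the unrelated phrase scores much lower, which is the property a search engine can exploit.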

Autoencoders: Finding what really matters

A major leap came with the introduction of autoencoders. These models were designed with a simple but powerful idea: compress data and then reconstruct it. To do this successfully, the encoder had to identify what truly mattered and ignore everything else.

This approach proved incredibly useful in real-world scenarios. In banking, for instance, autoencoders are used to detect fraud. By learning what “normal” behaviour looks like, they can quickly spot unusual transactions. If someone suddenly makes a high-value purchase in a different country, the system flags it not because it was told to, but because it learned that the behaviour is unusual.
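The compress, reconstruct, and compare loop can be sketched in a few lines. Note the substitution: instead of a trained neural autoencoder, this uses a linear encoder and decoder that share one weight vector found by power iteration, which is equivalent to PCA. The features and numbers are hypothetical, and the threshold rule (twice the worst error on normal data) is an assumption chosen for simplicity.

```python
import math

# Hypothetical "normal" history: (typical weekly spend, latest purchase), scaled.
# In this invented data the purchase amount tracks the usual spend.
NORMAL = [(1.0, 1.1), (2.0, 1.9), (3.0, 3.2), (4.0, 3.9), (5.0, 5.1)]

def mean(points):
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(2)]

def fit_direction(points, center, steps=100):
    """Power iteration for the main direction of variation in the data."""
    c = [[0.0, 0.0], [0.0, 0.0]]  # unnormalised 2x2 covariance matrix
    for p in points:
        d = [p[0] - center[0], p[1] - center[1]]
        for i in range(2):
            for j in range(2):
                c[i][j] += d[i] * d[j]
    w = [1.0, 0.0]
    for _ in range(steps):
        w = [c[0][0] * w[0] + c[0][1] * w[1], c[1][0] * w[0] + c[1][1] * w[1]]
        norm = math.hypot(w[0], w[1])
        w = [w[0] / norm, w[1] / norm]
    return w

def reconstruction_error(point, center, w):
    d = [point[0] - center[0], point[1] - center[1]]
    code = d[0] * w[0] + d[1] * w[1]        # encode: 2 numbers -> 1
    recon = [code * w[0], code * w[1]]      # decode: 1 number -> 2
    return math.hypot(d[0] - recon[0], d[1] - recon[1])

center = mean(NORMAL)
w = fit_direction(NORMAL, center)
threshold = 2 * max(reconstruction_error(p, center, w) for p in NORMAL)

suspicious = (1.0, 5.0)  # low usual spend, sudden high-value purchase
print(reconstruction_error(suspicious, center, w) > threshold)  # True
```

The suspicious point is flagged because it cannot be rebuilt well from the one-number code, not because any rule about purchase size was written down.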

Another everyday example is photo storage. When you upload images to a platform, encoders help reduce file size while keeping important details intact. That’s why images load quickly without looking heavily compressed.

The transformer era: Context changes everything

The real turning point in encoder evolution came with transformer models. What made them different was their ability to understand context. Instead of processing information step by step, they look at everything at once and decide what matters most.

This is especially important in language. Consider the sentence: “She saw the man with the telescope.” Who has the telescope? Earlier models might struggle with this ambiguity. Transformer-based encoders, however, analyse the entire sentence and make a more informed interpretation.
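The "look at everything at once" step is usually implemented as self-attention. The sketch below is a stripped-down version under stated assumptions: the token vectors are invented, and the learned query, key, and value projections that real transformers apply are left out. It will not resolve the telescope ambiguity; it only shows how each token's output becomes a weighted mix of every token in the sentence.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values = inputs."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score this token against every token in the sentence, itself included.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        # The output is a weighted average of all token vectors.
        mixed = [sum(wt * v[i] for wt, v in zip(weights, vectors))
                 for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights

# Toy 2-dimensional token vectors, made up for this example.
tokens = ["she", "saw", "telescope"]
vectors = [[1.0, 0.0], [0.5, 0.5], [0.0, 1.0]]
outputs, weights = self_attention(vectors)
for token, row in zip(tokens, weights):
    print(token, [round(wt, 2) for wt in row])
```

Each printed row shows how much one token draws on the others; in a trained model these weights are what let the encoder use surrounding words as context.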

This breakthrough powers many tools people use daily. When you interact with a chatbot, dictate a message, or translate text online, transformer encoders are working in the background. They make these interactions feel natural, not mechanical.

Encoders in everyday life

Today, encoders are everywhere, even if most people don’t realise it. They shape the way we interact with technology in subtle but powerful ways.

Streaming platforms use encoders to understand viewing habits. If you watch crime documentaries and psychological thrillers, the system doesn’t just categorise your interest; it learns patterns and suggests content that matches your taste more closely over time.

Navigation apps rely on encoders to process traffic data, road conditions, and user behaviour. That’s how they can suggest faster routes, sometimes even before congestion becomes obvious.

In healthcare, encoders assist doctors by analysing medical images. They don’t replace human judgement, but they can highlight areas of concern, helping professionals make quicker and more accurate decisions.

Multimodal encoders: Understanding more than one type of data

The latest evolution in encoders is perhaps the most exciting: multimodal ability. Instead of working with just one type of data, these encoders can process text, images and more at the same time.

This opens the door to experiences that feel far more natural. Imagine taking a photo of a plant and asking your phone how to care for it. A multimodal encoder can analyse the image, understand your question, and provide a useful answer in seconds.

Online shopping is another area seeing rapid improvement. Instead of typing a description, users can upload an image of a product they like. The system then finds similar items, combining visual recognition with contextual understanding.
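One way such a search could work is to place photos and text in a shared vector space and rank products by similarity. The sketch below assumes this design; the catalogue, the vectors, and the averaging rule for combining an image with a typed hint are all hypothetical stand-ins for what trained image and text encoders would produce.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

# Hypothetical catalogue: product name -> embedding of its photo.
CATALOGUE = {
    "red running shoes":  [0.9, 0.1, 0.1],
    "blue running shoes": [0.8, 0.1, 0.6],
    "red handbag":        [0.1, 0.9, 0.1],
}

def search(image_vec, text_vec=None, top_k=2):
    """Rank catalogue items by similarity to the query.

    If a text vector is given, it is averaged with the image vector,
    one simple (assumed) way to combine the two modalities.
    """
    query = image_vec
    if text_vec is not None:
        query = [(i + t) / 2 for i, t in zip(image_vec, text_vec)]
    ranked = sorted(CATALOGUE, key=lambda name: cosine(query, CATALOGUE[name]),
                    reverse=True)
    return ranked[:top_k]

uploaded_photo = [0.85, 0.1, 0.2]  # stand-in for an image encoder's output
typed_hint = [0.2, 0.0, 0.9]       # stand-in for a text encoder's output ("in blue")

print(search(uploaded_photo))              # photo only
print(search(uploaded_photo, typed_hint))  # photo plus typed hint
```

With these toy values, the photo alone ranks the red shoes first, while adding the typed hint moves the blue pair to the top.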

This ability to connect different types of information is pushing AI closer to how humans experience the world.

Challenges that come with progress

As encoders become more powerful, they also become more demanding. Advanced models require substantial computing resources for both training and everyday use, which can be expensive and energy-intensive. This raises important questions about sustainability and accessibility.

Bias is another concern. Since encoders learn from data, they can reflect existing inequalities. For example, if a system is trained on biased hiring data, it may unintentionally favour certain groups over others. Addressing this issue requires careful data selection and continuous oversight.

There’s also the matter of privacy. Encoders often process personal information, making data protection an important priority. Striking the right balance between innovation and responsibility is an ongoing challenge.

What lies ahead

The future of encoders is less about dramatic breakthroughs and more about refinement. Researchers are working on making models faster, more efficient, and less resource-heavy. This could make advanced AI tools accessible to smaller businesses and independent developers.

Personalisation is another area of growth. Encoders may soon adapt in real time, learning from individual users to deliver tailored experiences. In education, for example, systems could adjust content based on how a student learns best, making lessons more effective.

Multimodal systems will also continue to improve, blending different types of data more seamlessly. This could lead to more intuitive interfaces, where interacting with technology feels as natural as interacting with another person.

Conclusion: A quiet revolution with a big impact

Encoders may not be the most visible part of artificial intelligence, but they are among the most important. Their evolution from simple data converters to intelligent, multimodal systems has reshaped what machines can do.

What makes this journey interesting is how closely it mirrors real-world needs. Each advancement wasn’t just about better technology; it was about solving practical problems: understanding language, recognising images, detecting fraud, and improving everyday experiences.

As AI continues to grow, encoders will remain at its core, quietly transforming raw information into meaningful insight. They may work behind the scenes, but their impact is impossible to ignore.